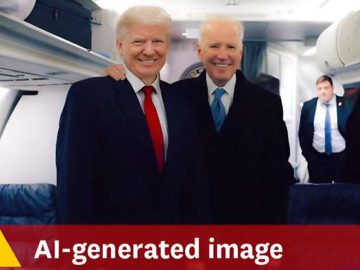

BOSTON ― Concern over the possible use of computer-generated images and sound recordings that could confuse and alienate voters prompted Massachusetts lawmakers to consider a bill Tuesday that would require any AI-generated campaign material to carry a warning label.

The bill, proposed by Sen. Barry Finegold, D-Andover, and Rep. Frank Moran, D-Lawrence, would ban the use of synthetic media in the 90 days preceding an election unless it is disclosed that the material was manipulated or generated by artificial intelligence.

The bill would also ban the deliberate manipulation of material with the intent of confusing or deceiving voters by presenting a political candidate or party in ways contrary to their stated platforms and policies.

The legislation also lays out consequences for violators: exposure to civil suits, penalties of up to $10,000 per incident and “any other relief the court deems proper.”

Tech companies back bill, want protections for AI makers

Christopher Gilrein, executive director of TechNet, the organization that calls itself the voice of innovation technology, said companies were fully in support of the bill.

“But we want it to look like the bills passed in the other states that have filed similar legislation,” Gilrein said.

Speaking in front of the Joint Committee on Election Laws, Gilrein requested that liability be ascribed only to the creator of the material, not to the tools and programs used or the platforms that disseminate it.

“Artificial intelligence has the potential to solve our most pressing issues. We support clear disclosure when AI is used in communications,” Gilrein said. He also requested the bill contain allowances for programmers to investigate the material to determine who made it and what tools were used.

Gilrein cited the recent use of a deepfake in the New Hampshire primaries: a robocall using a facsimile of President Joe Biden’s voice, which inaccurately suggested that if voters cast ballots in the January primary, they could be precluded from voting in November. The call went out on a Sunday evening, just two days before the Tuesday primary.

Biden was not on the ballot in New Hampshire but won the primary based on write-in votes. Investigators learned the fake call had been created by a political consultant who claimed it was intended to be a wake-up call regarding the proliferation of AI-generated campaign material and the potential for its misuse.

Use of deepfakes to influence voter choice could result in loss of trust in the American electoral system, according to Hamid Ekbia, a Syracuse University professor speaking for the Academic Alliance for AI Policy, a coalition of 40 major universities. The social and political implications, Ekbia said, include increased polarization and negative partisanship across the political spectrum.

13 states have already passed laws

According to Public Citizen, a national advocacy organization, 13 states have already passed laws regulating AI.

“The federal government has been slow to act,” said Craig Holman, the organization’s lobbyist. “It’s up to the states to step up.”

He described the 2024 election cycle as the first where AI could have a deep impact on campaigns and voter choice.

“It’s almost impossible to tell the difference between what’s real and what has been computer-generated,” Holman said.

He mentioned the 2023 mayoral campaign in Chicago, during which a widely disseminated deepfake appeared to record a candidate condoning police violence. The candidate, Paul Vallas, ultimately finished second in a close race.

The bill offers protections for traditional and social media platforms. A news outlet would be allowed to use the manipulated material as part of a news story as long as the outlet makes clear that the material is false.

Also, news outlets would not be liable for prosecution if they make an effort to ascertain the veracity of the material before using it in a story or broadcast. If they knowingly broadcast the fake material, they must alert the public, prominently, that it does not reflect the speech or conduct of the candidate depicted.