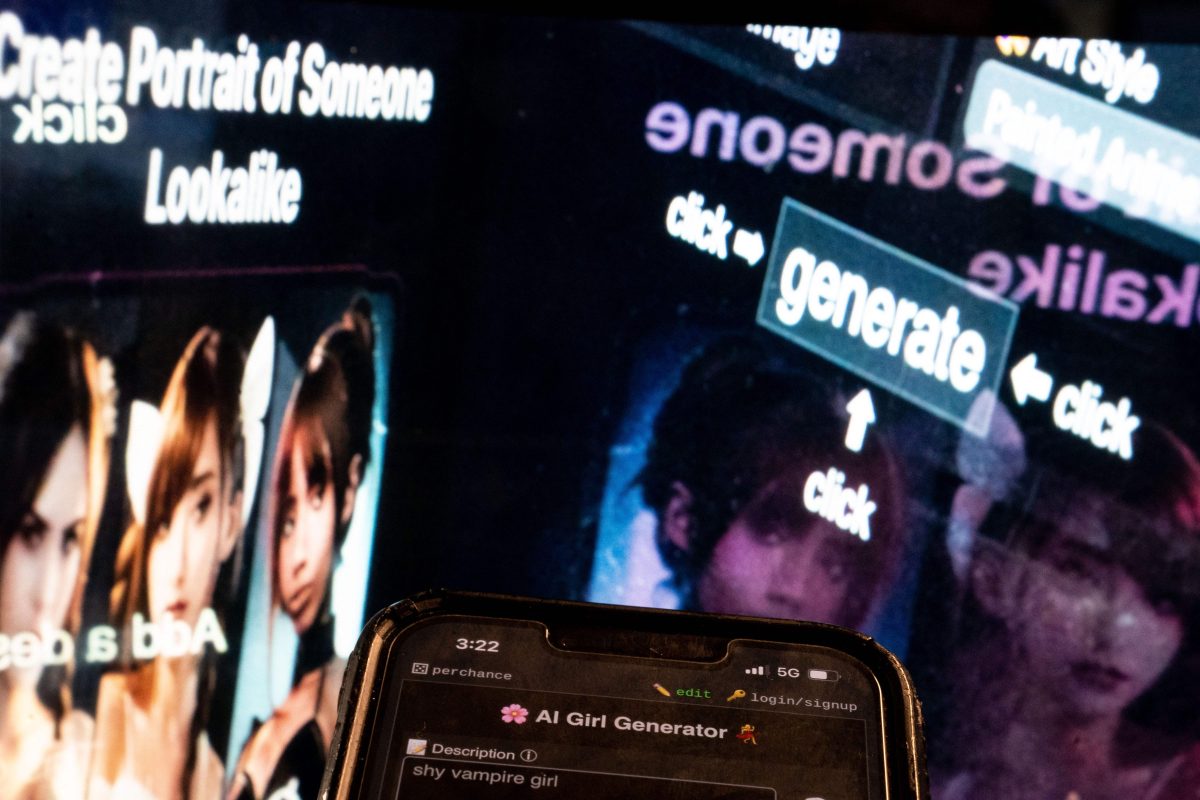

(The Hill) — Students are quickly learning the ease with which AI can create nefarious content, opening up a new world of bullying that neither schools nor the law are fully prepared for.

Educators are watching in horror as deepfake sexual images of their students are created, with sham voice recordings and videos also posing a looming threat.

Advocates are sounding the alarm on the potential damage — and on gaps in both the law and school policies.

“We need to keep up and we have a responsibility as folks who are supporting educators and supporting parents and families as well as the students themselves to help them understand the complexity of handling these situations, so that they understand the context, they can learn to empathize and make ethical decisions about the use and application of these AI systems and tools,” said Pati Ruiz, senior director of education technology and emerging tech at Digital Promise.

At Westfield High School in New Jersey last year, teen boys used AI to create sexually explicit images of female classmates.

“In this situation, there was some boys or a boy — that’s to be determined — who created, without the consent of the girls, inappropriate images,” Dorota Mani, the mother of one of the girls at the school who was targeted, told CNN at the time.

And in Pennsylvania, a mother allegedly created AI images of her daughter’s cheerleading rivals naked and drinking at a party before sending them to their coach, the BBC reported.

“The challenge at the time was that the district had to suspend [a student] because they really did think it was her — that she was naked and apparently smoking marijuana,” said Claudio Cerullo, founder of TeachAntiBullying.org.

Schools are struggling to respond to these new, vicious uses of AI: The school in Pennsylvania could not determine on its own that the images were fake and had to involve police.

Even experts in the field are just starting to wrap their heads around AI’s destructive power in the schoolyard or the locker room.

Cerullo also serves on Vice President Kamala Harris’s task force on cyberbullying and harassment, and he said the group has been discussing the increased risk of suicide among teens who are cyberbullied and what policies need to be developed.

They are “looking at procedures, working with local and state law enforcement officials when it comes to identifying AI standards and needs,” Cerullo said.

As it stands, even law enforcement has yet to find a clear path forward.

The Federal Trade Commission (FTC) proposed new protections against AI impersonations in February, looking to ban deepfakes completely, while the Department of Justice created an “artificial intelligence officer” position to better understand the new technology.

Last month, a bipartisan group of lawmakers released a report, endorsed by Senate Majority Leader Chuck Schumer (D-N.Y.), about what Congress needs to address regarding AI, including deepfakes.

“This is sexual violence,” Rep. Alexandria Ocasio-Cortez (D-N.Y.) said in a video last week promoting legislation to tackle deepfake pornography.

“And what is even crazier is that right now there are no federal protections for any person, regardless of your gender, if you’re a victim of nonconsensual deepfake pornography,” added Ocasio-Cortez, who said she has personally been a victim of such deepfakes.

The bipartisan Defiance Act she endorsed was introduced in both chambers in March. It would create a federal civil right of action for victims of nonconsensual AI porn so they can seek redress in court.

And while there has been discussion about what legal repercussions those who use AI to harm others should face, the issue becomes even dicier when minors are involved.

“The way that we address it might need to go beyond enforcement, because it might not be palatable for us to say, well, you know, we’re going to ruin a bunch of these kids’ lives for what might actually just be making a dumb mistake and experimenting,” said Alex Kotran, co-founder and CEO of the AI Education Project.

“If the threshold for distributing, creating and distributing child pornography is lowered from finding a minor, taking photos of that person, uploading those photos, sharing it on like your network — if it’s just a matter of like typing in a single text prompt or uploading a single thing, single image, it starts to feel like something that kids might do without realizing the enormity and the gravity of what they’re doing. And that’s why I think, in addition to laws, we have to be able to set clear norms as a society,” Kotran said.

At the same time, efforts to ensure students aren’t using AI for hostile purposes have raised concerns about over-surveillance.

“I think where we are here is really thinking about the ethics and emphasizing the need to be responsible with our use of these technologies and also protecting student and community data as well as privacy while managing the cyberbullying risks that arise with this new technology,” Ruiz said.

While deepfake pictures have made the most headlines, they are far from the only risk AI poses for schools. An athletic director at Pikesville High School in Maryland created an AI-generated fake recording of the principal’s voice in an attempt to paint him as racist and get him fired.

And deepfake videos will only become more accessible and realistic.

“I think treating this as a problem specific to child pornography or deepfake nudes is actually missing the forest for the trees. I think those issues are where deepfakes really are coming to a head — it’s like the most visceral — but I think the bigger challenge is how do we build sort of like the next iteration of digital literacy and digital citizenship with a generation of students that is going to have at their disposal these really powerful tools,” Kotran said.

He raised concerns that the technology could reach a point where students are afraid to share any pictures of themselves online for fear that those pictures could be used to make deepfakes.

“We have to try to get ahead of that challenge because I think it’s really undermined kids’ mental well-being and I see very few organizations or people that are really focusing in on that. And I just worry that this is no longer a future state, but very much like a clear and present danger that needs to be sort of taken ahead of,” Kotran said.