AI agents haven’t been around that long – mainstream generative

AI itself is less than four years old, as hard as that may seem to believe –

but the bulk of the IT world and the organizations big and small that IT serves

are all in. Adoption is growing, budgets are expanding, and more plans are

being put in place to do more with agents.

According to a survey of 300 senior executives by global

consultancy PwC, 88 percent plan to increase

their AI budgets because of the emergence of agentic AI – 71 percent said

they expect to grow those budgets by anywhere from 10 percent to more than 50

percent – 79 percent say they have already adopted AI agents, and, of those, 66

percent say agents are increasing productivity and delivering value.

And that’s against the backdrop of concerns that corporate

leaders have about the technology that, according to the Harvard Business

Review, range from data

issues to security to privacy.

The tech industry itself has essentially been remade to push

agentic technologies out to the waiting business world. Most IT vendors are

increasing their own spending to develop agentic tools for themselves and their

users and have built the agendas for their annual conferences around what

agents and supporting products they can offer.

That includes Google. At last week’s Google Cloud Next 2026

show in Las Vegas, Google chief executive officer Sundar Pichai appeared before

the keynote crowd via live video, promising that the hyperscaler is investing

huge amounts of money to support its agentic cloud ambitions. In 2022, Google

spent $31 billion in capital expenditures. The plan this year is for capex

investment to hit $175 billion to $185 billion, with more than half of Google’s

machine learning compute going toward the cloud business.
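
To put the jump in capital spending in perspective, the following is a quick back-of-the-envelope calculation using only the figures quoted above. The variable and function names are illustrative, not anything Google has published.

```python
# Figures quoted in the article, in billions of US dollars.
CAPEX_2022 = 31.0
CAPEX_PLAN_LOW = 175.0
CAPEX_PLAN_HIGH = 185.0


def growth_multiple(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


low_multiple = growth_multiple(CAPEX_PLAN_LOW, CAPEX_2022)
high_multiple = growth_multiple(CAPEX_PLAN_HIGH, CAPEX_2022)

# Prints: Planned capex is 5.6x to 6.0x the 2022 level
print(f"Planned capex is {low_multiple:.1f}x to {high_multiple:.1f}x the 2022 level")
```

In other words, the planned range works out to roughly five and a half to six times what Google spent in 2022.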

Google Cloud has been putting together the makings of a full

agentic AI stack, and the results of that work were front and center at the

conference. Google Cloud chief executive officer Thomas Kurian said the

company’s innovation spree is keeping pace with the rapid demand for agentic AI

among developers and other customers, noting that almost 75 percent of Google

Cloud customers now use the company’s AI products.

“Just one year ago, we stood on this same stage and promised

a new future for AI,” Kurian said during his keynote. “Today, that future is

running in production at a scale that the world has never seen. Over the last

year, we didn’t just see adoption. We saw transformation. We have thousands of

agents and services across every industry, reaching billions of people through

the global scale of our partner network. You have moved beyond the pilot. The

experimental phase is behind us, and now the real challenge begins.”

He added that organizations need a unified agentic stack to

move AI into production, saying that “you cannot deliver AI by piecing together

a puzzle piece or fragmented silicon and disconnected models. To drive real

value, you need an architecture where chips are designed for the models, models

are grounded in your data, agents and applications are built with models and

secured by the infrastructure.”

Last week, we wrote about the pair

of eighth generation Tensor Processing Unit (TPU) compute engines that Google

will ship before the end of the year. Beyond the hardware, there was the expected

firehose of announcements, with the focus squarely on agentic AI, and key among

them were new and expanded capabilities for building and running agents as well

as ensuring the data they need is ready for them.

One step for Google Cloud was expanding its Vertex AI

development platform by adding a range of new capabilities that developers can

use to create agents that touch on such areas as agent orchestration and

integration, DevOps, and security. The agents then become available to

organizations’ employees via Google’s Gemini Enterprise app.

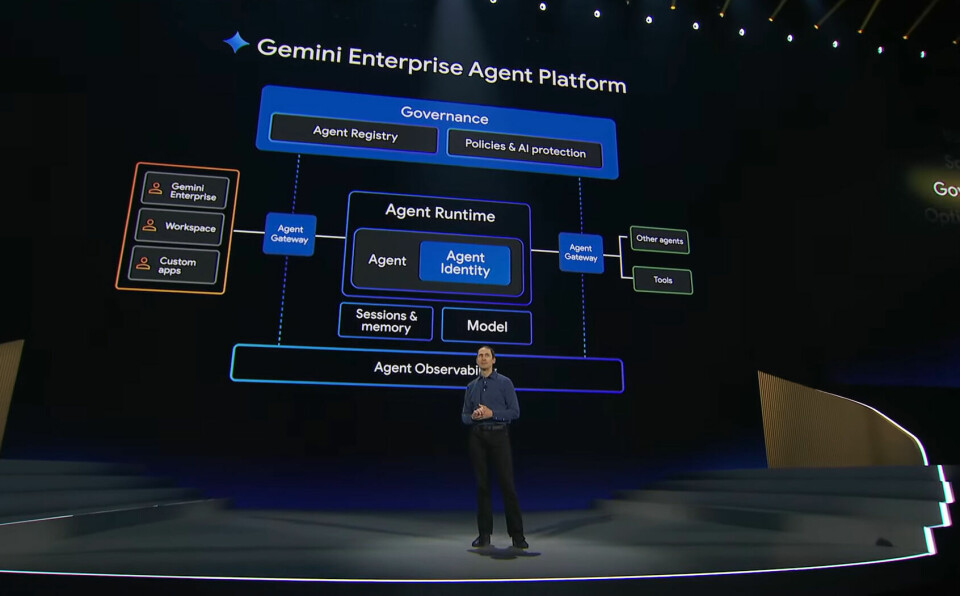

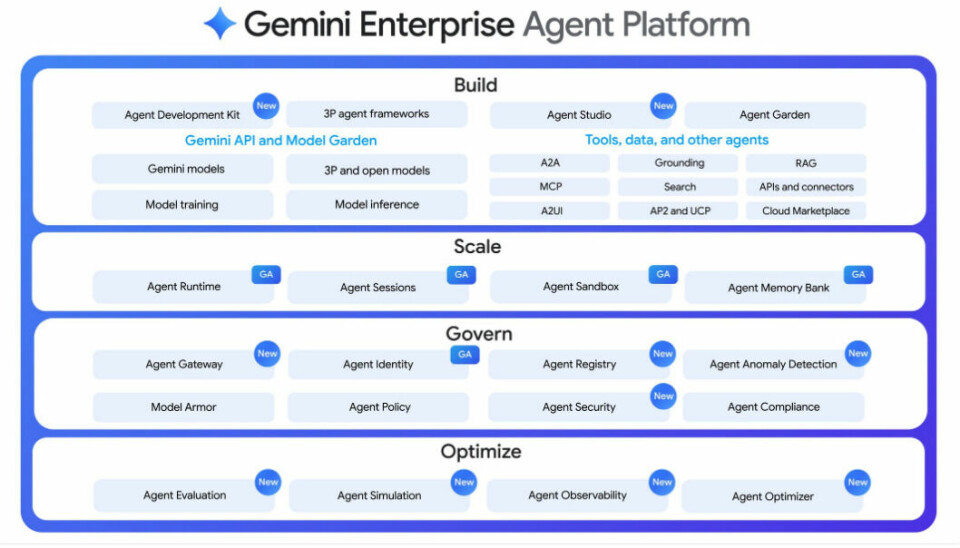

Through the Gemini Enterprise Agent Platform – the enhanced

and rebranded Vertex AI – developers have options for building agents, using

either the new Agent Studio, a low-code, visual interface, or an upgraded Agent

Development Kit open framework that includes AI-native coding to more quickly

create production-grade agents.

There’s a bulked-up Agent Runtime for supporting agents that

can run for days at a time and keep their context with persistent memory via

Memory Bank. The platform offers centralized control through Agent Identity,

Registry, and Gateway tools, which track identity and enforce guardrails, and

quality guarantees with Agent Simulation, Evaluation, and Observability

features that track agent execution and reasoning.
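
The idea of an agent keeping its context across long runs can be illustrated with a toy example. This is a minimal sketch of persistent memory in general, assuming a simple JSON file as the backing store. It is not Memory Bank's API, which the article does not describe, and the class and method names here are hypothetical.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class FileBackedMemory:
    """Toy persistent memory: facts survive across sessions via a JSON file.

    Hypothetical illustration only, not Google's Memory Bank interface.
    """

    def __init__(self, path: Path) -> None:
        self.path = path
        self.facts: dict[str, str] = {}
        # Reload anything a previous session wrote to the same file.
        if path.exists():
            self.facts = json.loads(path.read_text())

    def remember(self, key: str, value: str) -> None:
        """Store a fact and write the whole memory back to disk."""
        self.facts[key] = value
        self.path.write_text(json.dumps(self.facts))

    def recall(self, key: str) -> Optional[str]:
        """Return a stored fact, or None if it was never recorded."""
        return self.facts.get(key)


store = Path(tempfile.mkdtemp()) / "agent_memory.json"

# First session records a fact, then goes away.
first_session = FileBackedMemory(store)
first_session.remember("customer_tier", "enterprise")

# A later session pointed at the same store picks the fact back up.
second_session = FileBackedMemory(store)
print(second_session.recall("customer_tier"))  # prints: enterprise
```

A production service would add scoping per user or agent, retrieval over many memories, and access controls, none of which this sketch attempts.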

It also includes native integration with the Model Context

Protocol (MCP), an Anthropic-created open standard for making it easier for agents to

access external data sources and applications.
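
For readers unfamiliar with MCP, it is built on JSON-RPC 2.0, and an agent invokes an external tool by sending a `tools/call` request to an MCP server. The sketch below only builds such a message. The tool name and arguments are hypothetical, and nothing here reflects how Google's platform wires MCP in.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(1, "query_sales_table", {"region": "EMEA", "limit": 10})
print(request)
```

In practice an MCP client library would handle the transport, the initialization handshake, and tool discovery through `tools/list` before any call like this is made.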

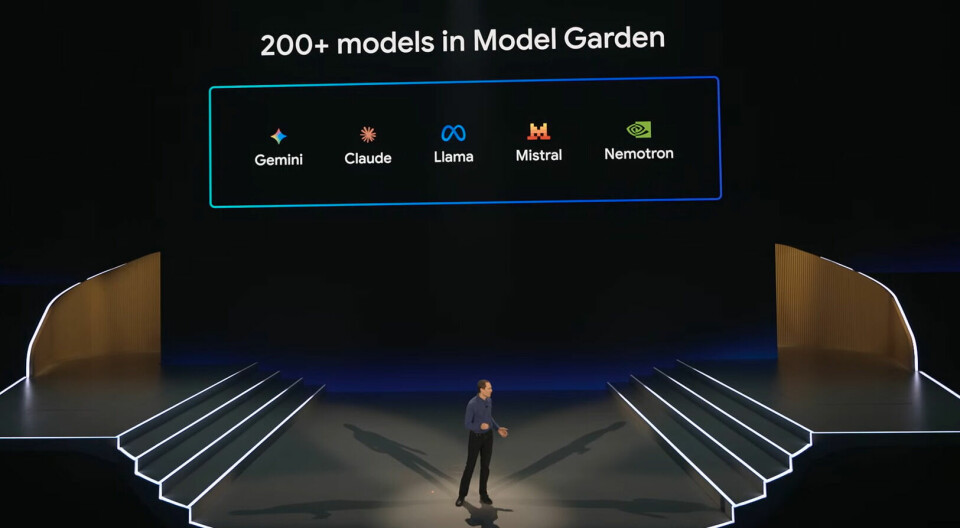

With the platform, development teams – through the

platform’s Model Garden – also get access to more than 200 AI models, including

Google’s latest Gemini 3.1 Pro, which is in preview and optimized for workflow

orchestration, as well as Gemini 3.1 Flash Image for visual assets and Lyria 3

for audio and music. There’s also support for models from other vendors,

including Anthropic’s Claude, Meta Platforms’ Llama, Mistral AI, and Nvidia’s Nemotron.

Google Cloud also has turned its attention to bringing data

storage and management into the agentic age.

“Reasoning without context is just a guess,” Karthik Narain,

chief product and business officer for Google Cloud, said on stage. “When you

expect your AI to make decisions and your agents to take actions, you cannot

afford to guess. Trusted context turns an intelligent guess to a decisive

action. We’re completely rethinking the data platform.”

The result of the rethinking is the Agentic Data Cloud, a

new umbrella offering that includes some of what the company was already doing

with a number of agent-focused tools and capabilities, allowing for agents to

interact with data they use to complete their tasks. Foundational to this is

what Google Cloud calls its cross-cloud lakehouse, which is designed to let

agents go and work on data where it resides, rather than making copies and bringing

them back.

“The reality is data lives everywhere, at Google, at AWS,

Azure, and across your SaaS applications,” Narain said. “Your old lakehouse

expected the analytical engines and the data storage to reside in the same

cloud. This approach is broken. [The cross-cloud lakehouse] is completely

borderless. Instead of forcing you to accept complex networking [processes] or

massive egress fees, we deliver low latency, direct connectivity to AWS and

Azure, as if the data sat natively in Google Cloud. No more moving data, no

more vendor lock-in.”

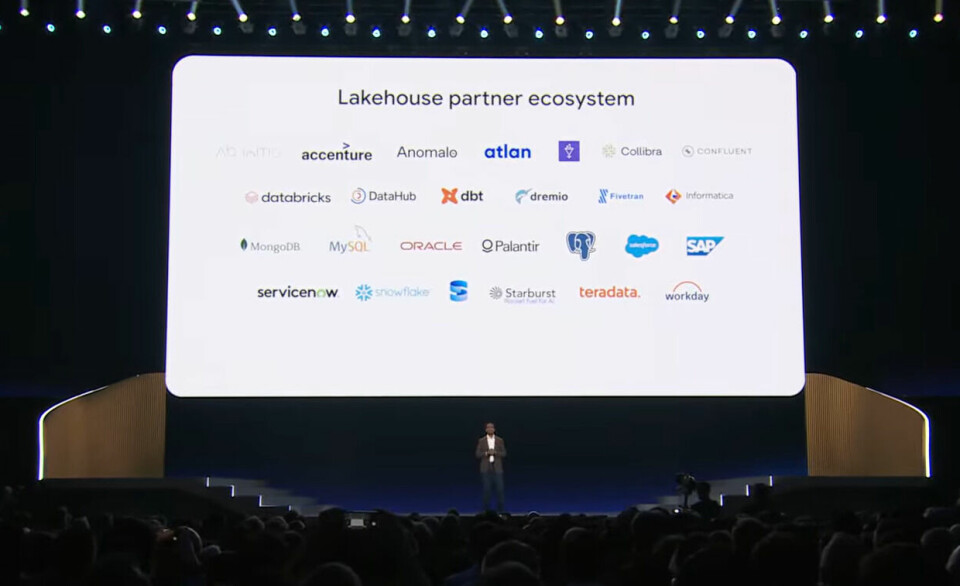

The cross-cloud lakehouse is enabled by the integration of the

Cross-Cloud Interconnect into the vendor’s data plane and is based on Apache’s

Iceberg for large-scale analytics. In addition, it includes interoperability

with BigQuery Apache Spark and OSS frameworks like Spark, Trino, and

Flink, as well as third-party engines like Databricks

and Snowflake. It’s akin to what Google has done with its open

lakehouse efforts.

Other highlights included Google Cloud’s Data Agent Kit,

which includes its Data Engineering Agent for building and transforming data

pipelines and enforcing governance rules to protect against bad data, Data

Science Agent for automatically scaling a model across BigQuery DataFrames and

Serverless Apache Spark, and Data Observability Agent for protecting the agent

infrastructure.

The cloud provider’s Dataplex Universal Catalog

grew up to become Knowledge Catalog, a universal context engine that integrates

with BigQuery to transform tables and metadata into unified business logic,

while its Smart Storage tool does the same with unstructured data.