Another AI level-up is here. While this isn’t a new generative AI video model built to rival the likes of Seedance 2.0, this new technology from Adobe aims to give AI creators a whole host of new tools for controlling their AI-generated video.

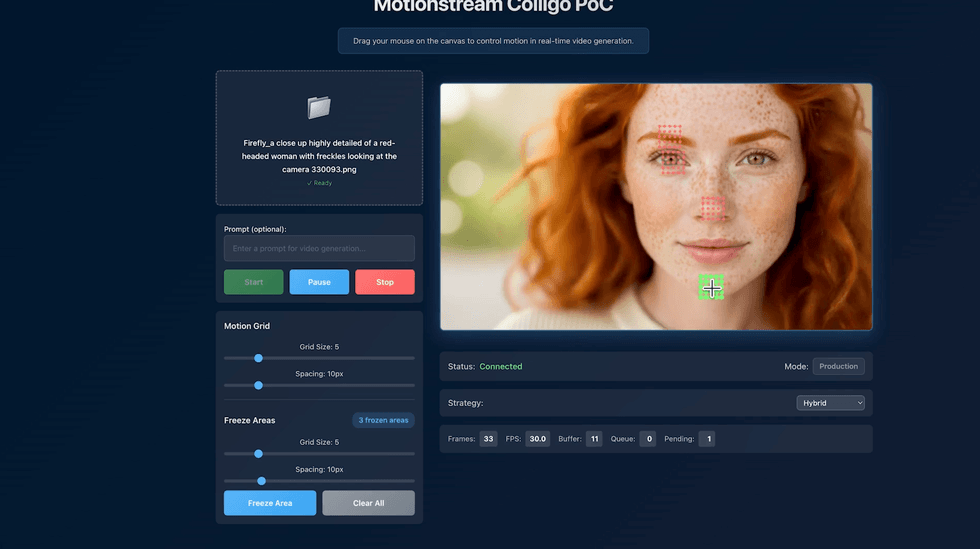

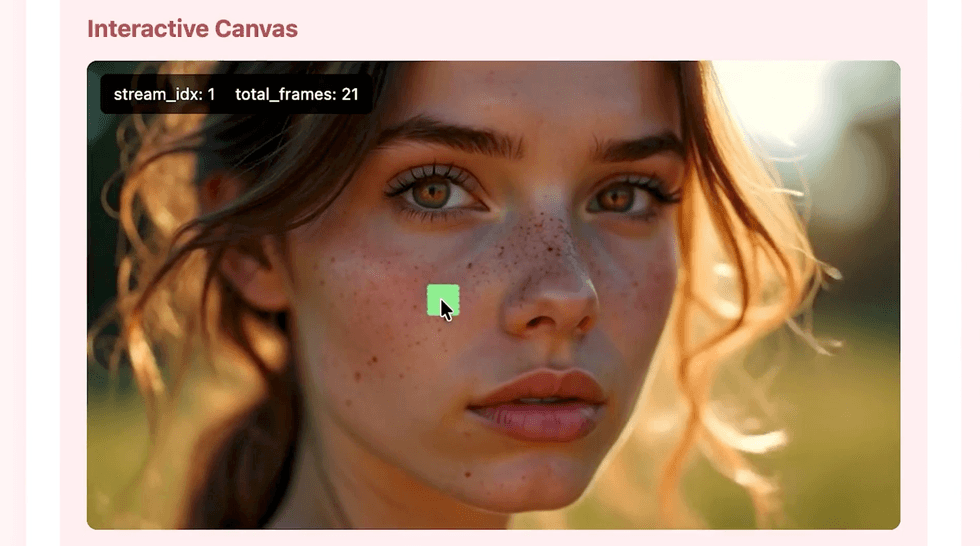

Called “MotionStream,” this new, experimental technology from Adobe lets creators interact with AI-generated video as it’s being created. They can “direct” the movement of objects and use intuitive controls to do things like change camera angles.

All of this is set to happen in real time, with cursors and sliders, and could be another level-up for AI video technology. If your interest (or existential crisis) is piqued, here’s what you need to know.

Adobe Unveils MotionStream

Credit: Adobe

Developed by Adobe researchers who have published their work on MotionStream and are now offering a preview to the public, this new technology isn’t too dissimilar from the tools or controls we’ve seen in other generative AI models and platforms like Runway’s Director Mode.

What sets it apart may simply be its performance and intuitiveness. According to Eli Shechtman, Senior Principal Scientist at Adobe and one of the researchers behind MotionStream, it could bring a “big change in how people could control video in the future.”

According to Adobe, the MotionStream experience will be quick and controllable, with natural movement built in. By contrast, most current generative AI tools require users to enter a text prompt, click around, and then wait tens of seconds, or even a minute, for the tool to produce or edit a video clip. That means a lot of sitting, waiting, and frustration.

The Adobe MotionStream Experience

Credit: Adobe

With MotionStream, Adobe hopes users will be able to shape a clip while it is being generated, steering the movement of objects and adjusting the camera in real time with simple cursor and slider controls.

“That’s where a lot of the magic happens: in the secondary effects that are really hard to control manually. If you want to move an elephant, for example, you can click and move its body, but it’s a lot of work to manually make those movements look natural. This currently requires skills and specialized software to rig, and animate or keyframe the animation, following a process that typically takes hours, if not days, depending on scope. Instead, the underlying video generator behind MotionStream is basically simulating the world in real time. So, the elephant’s legs move naturally, and the ears flap naturally as the elephant moves. The model provides you with knowledge about the world and you can interact with it.” – Eli Shechtman, Senior Principal Scientist and one of the researchers behind MotionStream.

Overall, with reduced latency and more control, this is one future for AI video that might be a bit more malleable and usable for filmmakers and video creatives. However, many of the same concerns surrounding generative AI will likely remain.

If you’re intrigued, or morbidly curious, you can find more information on Adobe’s Research page.
