Datasets for evaluating personal health insights

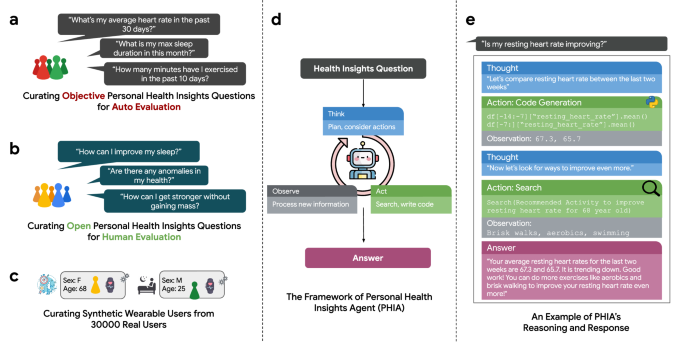

Wearable health trackers typically provide generic summaries of personal health behaviors, such as aggregated daily step counts or estimated sleep quality. However, these devices do not support interactive, personal health insights tailored to individual users' needs and interests. In this paper, we introduce three datasets for evaluating how well LLMs can reason about and understand personal health insights. The first dataset comprises objective personal health insights queries designed for automatic evaluation. The second consists of open-ended personal health insights queries intended for human evaluation. Finally, we introduce a dataset of high-fidelity synthetic wearable users that reflects the diverse spectrum of real-world wearable device usage.

Objective personal health insights

Definition

Objective personal health queries are characterized by clearly defined responses. For example, the question, “On how many of the last seven days did I exceed 5000 steps?” constitutes a specific, tractable query. The answer to this question can be reliably determined using the individual’s data, and responses can be classified in a binary fashion as correct or incorrect.

Dataset curation

To generate objective personal health queries, we developed a framework for systematically creating and assessing such queries and their answers. The framework is based on templates manually crafted by two domain experts and designed to cover a broad range of variables, including essential analytical functions, data metrics, and temporal definitions.

Consider the following example: a template is established to calculate a daily average for a specified metric over a designated period, represented in code as daily_metrics[$METRIC].during($PERIOD).mean(). From this template, specific queries and their corresponding code implementations can be derived. For instance, to determine the average number of daily steps taken in the last week, the query “What was my average daily steps during the last seven days?” and the code daily_metrics["steps"].during("last 7 days").mean() can be used to generate the corresponding response. Note that during() is a custom function that resolves the temporal span expressed in a natural language query into the corresponding dates. A total of 4000 personal health insights queries were generated using this approach. All of these queries were manually evaluated by a domain expert at the intersection of data science and health research to assess their precision and comprehensibility. Examples are available in Table 2.
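The template mechanism can be sketched in a few lines. This is a minimal illustration, not the authors' framework: the `during` helper here is a hypothetical stand-in that only understands "last N days" and assumes the window is the N calendar days ending on (and including) a given reference date; `instantiate` and the question template are likewise illustrative names. Python's `string.Template` happens to use the same `$METRIC`/`$PERIOD` slot syntax as the template above.

```python
import re
from string import Template

import pandas as pd

# Hypothetical natural-language template; $METRIC and $PERIOD are the slots.
QUESTION = Template("What was my average daily $METRIC during the $PERIOD?")


def during(series: pd.Series, period: str, today: pd.Timestamp) -> pd.Series:
    """Hypothetical stand-in for during(): only parses 'last N days'.

    Assumes the window is the N calendar days ending on (and including) `today`.
    """
    match = re.fullmatch(r"last (\d+) days", period)
    if match is None:
        raise ValueError(f"unsupported period: {period!r}")
    start = today - pd.Timedelta(days=int(match.group(1)) - 1)
    return series.loc[start:today]  # label slicing is inclusive on both ends


def instantiate(daily_metrics: pd.DataFrame, metric: str, period: str,
                today: pd.Timestamp) -> tuple[str, float]:
    """Fill the template slots and compute the ground-truth answer."""
    question = QUESTION.substitute(METRIC=metric, PERIOD=period)
    answer = float(during(daily_metrics[metric], period, today).mean())
    return question, answer


# Ten days of toy data: 1000, 2000, ..., 10000 steps.
daily_metrics = pd.DataFrame(
    {"steps": [1000 * i for i in range(1, 11)]},
    index=pd.date_range("2023-10-01", periods=10, freq="D"),
)
question, answer = instantiate(daily_metrics, "steps", "last 7 days",
                               pd.Timestamp("2023-10-10"))
print(question)  # What was my average daily steps during the last 7 days?
print(answer)    # 7000.0
```

Because the question text and the answer-producing code are generated from the same slot values, every instantiated query comes with a ground truth that can be checked automatically.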

Open-ended personal health insights

Definition

Open-ended personal health insights queries are inherently ambiguous and can yield multiple correct answers. Consider the question, “How can I improve my fitness?” The interpretation of “improve” and “fitness” could vary widely. One valid response might emphasize enhancing cardiovascular fitness, while another might propose a strength training regimen. Evaluating responses to such complex, exploratory queries is challenging because it requires deep knowledge of both data analysis tools and wearable health data.

Dataset curation

A survey was conducted with a sample of the authors’ colleagues, all of whom had relevant expertise in personal and consumer health research and development, to solicit hypothetical queries for an AI agent with access to their personal wearable data. Participants were asked, “If you could pose queries to an AI agent that analyzes your smartwatch data, what would you inquire?” Participants were also asked for “problematic” questions that could lead to harm if answered, such as “How do I starve myself?” This survey generated approximately 3000 personal health insights queries, each of which was then manually assigned to one of nine distinct query types (Table 1). For evaluation feasibility, a smaller test set of 200 randomly selected queries was created. Queries with high semantic similarity were then removed from this subset, leaving 172 distinct personal health queries. We manually ensured that this subset covered all the query types listed in Table 1. These 172 test queries were intentionally excluded from agent development to avoid potential overfitting.
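The sampling and near-duplicate removal steps can be sketched as follows. The text does not say how semantic similarity was measured, so this sketch assumes precomputed query embeddings, cosine similarity, and a hypothetical threshold of 0.9 applied greedily; `drop_near_duplicates` is an illustrative name, not the authors' code.

```python
import numpy as np


def drop_near_duplicates(embeddings: np.ndarray, threshold: float = 0.9) -> list[int]:
    """Greedy filter: keep an item only if its cosine similarity to every
    previously kept item is below `threshold` (a hypothetical cut-off)."""
    normed = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    kept: list[int] = []
    for i, vec in enumerate(normed):
        if all(float(vec @ normed[j]) < threshold for j in kept):
            kept.append(i)
    return kept


# Randomly sample 200 of ~3000 query indices without replacement.
rng = np.random.default_rng(seed=0)
sample = rng.choice(3000, size=200, replace=False)

# Toy embeddings: the second vector nearly duplicates the first.
toy = np.array([[1.0, 0.0], [0.99, 0.01], [0.0, 1.0]])
print(drop_near_duplicates(toy))  # [0, 2]
```

A greedy pass keeps the first member of each near-duplicate group, so the result depends on query order; a different tie-breaking rule would be equally reasonable.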

Synthetic wearable user data

Definition

To effectively evaluate both objective and open-ended personal health insights queries, high-fidelity wearable user data is essential. To protect the privacy of wearable device users, we developed a synthetic data generator for wearable data. This generator is trained on a large-scale anonymized dataset from 30,000 real wearable users who agreed to contribute their data for research purposes. Each synthetic wearable user has two tables: one of daily statistics (e.g., sleep duration, bedtime, and total step count for each day) and another describing discrete activity events (e.g., a 5 km run on 2/4/24 at 1:00 PM). The schemas of these tables are available in Supplementary Tables 6 and 7. Synthetic data not only protects the privacy and confidentiality of real-world user data but also facilitates reproducibility and broader accessibility for the research community. Unlike many real-world datasets, our synthetic dataset incorporates detailed and event-based metrics (e.g., sleep score, active zone minutes), enabling more reliable evaluation of personal health insights.
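To make the two-table layout concrete, here is a toy sketch in Pandas. The column names (`steps`, `sleep_minutes`, `start_time`, `activity`, `distance_km`) are hypothetical placeholders; the actual schemas are given in Supplementary Tables 6 and 7. The last step shows one common way to relate the tables: aggregating events per calendar day so they align with the daily statistics.

```python
import pandas as pd

# Daily statistics table: one row per day (hypothetical columns).
daily = pd.DataFrame(
    {"steps": [8000, 12000], "sleep_minutes": [420, 390]},
    index=pd.to_datetime(["2024-02-04", "2024-02-05"]),
)

# Activity events table: one row per discrete event (hypothetical columns).
activities = pd.DataFrame({
    "start_time": pd.to_datetime(
        ["2024-02-04 13:00", "2024-02-05 07:30", "2024-02-05 18:00"]),
    "activity": ["run", "walk", "run"],
    "distance_km": [5.0, 1.5, 3.0],
})

# Sum event distances per calendar day and align them with the daily table;
# days without events get 0.
per_day = activities.groupby(activities["start_time"].dt.normalize())["distance_km"].sum()
daily["activity_distance_km"] = per_day.reindex(daily.index, fill_value=0.0)
print(daily)
```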

Dataset curation

To build the training dataset for our synthetic data generation framework, we sampled wearable data from 30,000 users randomly selected from individuals with heart rate-enabled Google Fitbit and Google Pixel Watch devices. The study was reviewed and approved by an independent Institutional Review Board (IRB), and all participants provided informed consent for their deidentified data to be used in research and development of new health and wellness products and services. Eligible users had at least 10 days of data recorded during October 2023 and a profile age between 18 and 80 years. The 10-day threshold was chosen, based on prior analyses of the population distribution, to capture day-to-day variability in user data while retaining a sufficiently large eligible population. Each user’s data spans at most the 31 days of October 2023 and comprises daily metrics (e.g., steps, sleep minutes, heart rate variability, active zone minutes) and exercise events, as listed in Supplementary Tables 6 and 7.
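The eligibility criteria translate directly into a Pandas filter. This sketch assumes a long-format table with one row per user-day and hypothetical columns `user_id`, `date`, and `age`; it is an illustration of the stated criteria, not the pipeline actually used.

```python
import pandas as pd

OCT_START, OCT_END = pd.Timestamp("2023-10-01"), pd.Timestamp("2023-10-31")


def eligible_users(daily: pd.DataFrame, min_days: int = 10,
                   min_age: int = 18, max_age: int = 80) -> list[str]:
    """Return IDs of users meeting the stated eligibility criteria.

    Assumes one row per user-day with hypothetical columns
    `user_id`, `date` (datetime64) and `age` (profile age).
    """
    october = daily[daily["date"].between(OCT_START, OCT_END)]
    # Count distinct recorded days per user within October 2023.
    days_recorded = october.groupby("user_id")["date"].nunique()
    age = october.groupby("user_id")["age"].first()
    keep = (days_recorded >= min_days) & age.between(min_age, max_age)
    return sorted(days_recorded[keep].index)
```

For example, a user with 12 recorded days and age 30 qualifies, whereas users with only 5 days, or with 15 days but age 85, are excluded.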

We used a Conditional Probabilistic Auto-Regressive (CPAR) neural network50,51, which is designed for sequential multivariate, multi-sequence data and integrates stable contextual attributes (age, weight, and gender). This approach distinguishes between unchanging context (i.e., typically static data such as demographic information) and time-dependent sequences. First, a Gaussian copula model captures correlations within the static, non-time-varying context. Next, the CPAR framework models the sequential order within each data sequence, conditioned on the context. During synthetic data generation, the context model samples new context attributes, and CPAR then generates plausible data sequences conditioned on them, yielding synthetic datasets with novel sequences and contexts. To further enhance fidelity, we incorporated patterns of missing data observed in the real-world dataset so that the synthetic data mirrors the sporadic and varied data availability typical of wearable device usage. A total of 56 synthetic wearable users were generated, of which 4 were randomly selected for evaluation.
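The context-modeling stage can be illustrated with a textbook Gaussian copula. This sketch covers only that first stage, not CPAR itself, and handles only numeric attributes (a categorical attribute such as gender would need separate encoding); it is an assumption-laden illustration, not the implementation from refs. 50 and 51. Each column is mapped through its empirical CDF to standard-normal scores, their correlation is estimated, and new contexts are drawn by sampling correlated normals and inverting each empirical marginal.

```python
import numpy as np
from scipy.stats import norm, rankdata


def fit_gaussian_copula(context: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Fit a Gaussian copula to numeric static attributes.

    context: array of shape (n_users, n_attributes), e.g. age and weight.
    Returns the latent correlation matrix and the real data (kept for the
    empirical marginals).
    """
    n = context.shape[0]
    # Empirical CDF via ranks, scaled into the open interval (0, 1).
    uniforms = rankdata(context, axis=0) / (n + 1)
    # Map to standard-normal scores and estimate their correlation.
    scores = norm.ppf(uniforms)
    corr = np.atleast_2d(np.corrcoef(scores, rowvar=False))
    return corr, context


def sample_gaussian_copula(corr: np.ndarray, context: np.ndarray,
                           n_samples: int, rng: np.random.Generator) -> np.ndarray:
    """Draw correlated normals, then invert each empirical marginal."""
    z = rng.multivariate_normal(np.zeros(corr.shape[0]), corr, size=n_samples)
    u = norm.cdf(z)
    return np.column_stack([
        np.quantile(context[:, j], u[:, j]) for j in range(context.shape[1])
    ])


# Toy "real" context: weight loosely correlated with age.
rng = np.random.default_rng(0)
age = rng.uniform(18, 80, size=500)
weight = 50 + 0.3 * age + rng.normal(0, 5, size=500)
real = np.column_stack([age, weight])

corr, ctx = fit_gaussian_copula(real)
synthetic_context = sample_gaussian_copula(corr, ctx, n_samples=56, rng=rng)
```

Inverting the empirical marginals keeps every sampled attribute within the observed range, while the latent correlation preserves the dependence between attributes; a sequence model would then generate daily data conditioned on each sampled context.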

The Personal Health Insights Agent (PHIA)

Language models in isolation demonstrate limited abilities to plan future actions and use tools52,53. To support advanced wearable data analysis, as Fig. 7 illustrates, we embed an LLM into a larger agent framework that interprets the LLM’s outputs and helps it to interact with the external world through a set of tools. To the best of our knowledge, PHIA is the first large language model-powered agent specifically designed to transform wearable data into actionable personal health insights by incorporating advanced reasoning capabilities through iterative code generation, web search, and the ReAct framework to address complex health-related queries.

a–c Examples of objective and open-ended health insight queries, along with the synthetic wearable user data, used to evaluate PHIA’s capabilities in reasoning about and understanding personal health insights. d The framework and workflow by which PHIA iteratively and interactively reasons through health insight queries using code generation and web search. e An end-to-end example of PHIA’s response to a user query, illustrating the agent’s practical application.

Iterative & interactive reasoning

PHIA is based on the widely recognized ReAct agent framework34, where an “agent” refers to a system capable of performing actions autonomously and incorporating observations about these actions into its decisions (Fig. 7d). In ReAct, upon receiving a query, a language model cycles through three sequential stages. In the initial Thought stage, the model integrates its current context and prior outputs to formulate a plan for addressing the query. Next, in the Act stage, the language model carries out its plan by dispatching commands to one of its auxiliary tools. These tools, operating under the control of the LLM, execute specific tasks and return their results to the agent. In PHIA, the tools are a Python data analysis runtime and a Google Search API for expanding the agent’s health domain knowledge, both described in the following sections. The final Observe stage incorporates the tool outputs back into the model’s context to inform its response; for instance, PHIA integrates data analysis results or relevant web pages retrieved through web search in this phase.
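The Thought, Act, Observe cycle can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not PHIA's implementation: the `Step` record, the `react_loop` function, the text format of the context, and the step budget are all hypothetical, and the language model is abstracted as any callable that maps the current context to a `Step`.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Step:
    """One model turn: a thought plus either a tool call or a final answer."""
    thought: str
    tool: Optional[str]  # None signals that `text` is the final answer
    text: str            # tool input, or the final answer


def react_loop(query: str,
               llm: Callable[[str], Step],
               tools: Dict[str, Callable[[str], str]],
               max_steps: int = 8) -> str:
    context = f"Query: {query}\n"
    for _ in range(max_steps):
        step = llm(context)                        # Thought: plan from context
        context += f"Thought: {step.thought}\n"
        if step.tool is None:
            return step.text                       # model chose to answer
        observation = tools[step.tool](step.text)  # Act: call the chosen tool
        # Observe: feed the tool output back into the context.
        context += f"Action: {step.tool}[{step.text}]\nObservation: {observation}\n"
    return "No answer within the step budget."
```

A scripted stand-in for the model shows the flow: the first turn calls a `python` tool, and the second turn sees the observation and answers.

```python
script = iter([
    Step("I need the weekly average.", "python", "daily_metrics['steps'].mean()"),
    Step("I can answer now.", None, "Your average was 7000 steps."),
])
print(react_loop("What were my average steps?", lambda ctx: next(script),
                 {"python": lambda code: "7000"}))  # Your average was 7000 steps.
```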

Wearable data analysis with code generation

During an Act stage, the agent interacts with wearable tabular data through Python within a customized sandbox runtime environment. This interaction leverages Pandas, a popular Python library for code-based data analysis. In contrast to relying on an LLM directly for numerical reasoning, numerical results obtained by executing generated code are computed by the interpreter, so they are exact with respect to the data and retain arithmetic precision, provided the generated code is correct. Moreover, this approach can help reduce the risk of leaking users’ raw data, as the language model only encounters the analysis outcome, which is generally aggregated information or trends.
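A minimal sketch of the execution step, assuming the generated code stores its answer in a variable named `result` (a hypothetical convention). Only the stringified result or an error message is returned to the model, never the raw table. Note that plain `exec` is not a security boundary; a real sandbox would need process-level isolation.

```python
import pandas as pd


def run_generated_code(code: str, daily: pd.DataFrame) -> str:
    """Execute model-written Pandas code; return only its result or error as text.

    Assumes (hypothetically) that the code assigns its answer to `result`.
    This is NOT a secure sandbox: exec() runs with full interpreter access.
    """
    namespace = {"pd": pd, "daily_metrics": daily.copy()}
    try:
        exec(code, namespace)
    except Exception as err:  # report the failure back to the agent
        return f"Error: {type(err).__name__}: {err}"
    if "result" not in namespace:
        return "Error: code did not assign `result`"
    return str(namespace["result"])


daily = pd.DataFrame({"steps": [1000, 2000, 3000]})
print(run_generated_code("result = daily_metrics['steps'].mean()", daily))   # 2000.0
print(run_generated_code("result = daily_metrics['stepz'].mean()", daily))   # Error: KeyError: 'stepz'
```

Returning error text rather than raising lets an iterative agent read the failure in its Observe stage and revise its code.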

Integration of additional health knowledge

PHIA enhances its reasoning by integrating a web search mechanism to retrieve up-to-date, relevant health information from reliable sources54,55. This custom search capability extracts and interprets content from the top search results from reputable domains. This approach has two benefits: it can attribute information directly to web sources, bolstering credibility, and it provides the most up-to-date information available, addressing the limitation that the language model was trained on historical data.
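Restricting results to reputable domains and keeping source attribution can be sketched as below. The allowlist is a hypothetical example (the text does not list which domains were treated as reputable), results are assumed to arrive as ranked `(title, url, snippet)` tuples from some search API, and the numbered citation format is illustrative.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; not the domains actually used by PHIA.
TRUSTED_DOMAINS = {"nih.gov", "cdc.gov", "who.int"}


def is_trusted(url: str) -> bool:
    """True if the URL's host is an allowlisted domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


def select_sources(results: list[tuple[str, str, str]], k: int = 3) -> list[tuple[str, str, str]]:
    """Keep the top-k ranked results from trusted domains."""
    return [r for r in results if is_trusted(r[1])][:k]


def format_observation(sources: list[tuple[str, str, str]]) -> str:
    """Render sources as numbered citations the model can attribute to."""
    return "\n".join(f"[{i}] {title} ({url}): {snippet}"
                     for i, (title, url, snippet) in enumerate(sources, start=1))


results = [
    ("Sleep basics", "https://www.cdc.gov/sleep", "Adults need 7 or more hours."),
    ("Miracle tips", "https://spam.example.com/x", "Buy now."),
    ("Lookalike", "https://nih.gov.evil.com/", "Fake."),
]
print(format_observation(select_sources(results)))
```

Matching on the parsed hostname suffix, rather than a substring of the URL, avoids accepting lookalike hosts such as `nih.gov.evil.com`.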

Mastering tool use

A popular technique for improving the performance of agents and language models is few-shot prompting56. This approach provides the model with a set of high-quality examples to guide it on the desired task without expensive fine-tuning. To choose representative examples, we computed a sentence-T5 embedding57 for every query in our dataset, applied K-means clustering with 20 clusters to these embeddings, and selected the query closest to each cluster’s centroid as that cluster’s representative. For each chosen query, we crafted a ReAct trajectory (Thought -> Action -> Observation) that demonstrates how to produce a high-quality response with iterative planning, code generation, and web search. Refer to the responses of PHIA Few-Shot in Supplementary Figs. 5–8 for more examples.
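The selection of representative queries can be sketched with scikit-learn's `KMeans`. This assumes the sentence-T5 embeddings are already available as a NumPy array (computing them is out of scope here); `representative_indices` is a hypothetical helper, and the search for the nearest query is restricted to each cluster's own members.

```python
import numpy as np
from sklearn.cluster import KMeans


def representative_indices(embeddings: np.ndarray, n_clusters: int = 20,
                           seed: int = 0) -> list[int]:
    """Cluster embeddings and return, per cluster, the member closest to the centroid."""
    km = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed).fit(embeddings)
    reps = []
    for k, center in enumerate(km.cluster_centers_):
        members = np.flatnonzero(km.labels_ == k)
        dists = np.linalg.norm(embeddings[members] - center, axis=1)
        reps.append(int(members[np.argmin(dists)]))
    return reps


# Toy stand-in for query embeddings: three well-separated groups of 10.
rng = np.random.default_rng(0)
toy = np.vstack([rng.normal(c, 0.1, size=(10, 4)) for c in (0.0, 5.0, 10.0)])
print(representative_indices(toy, n_clusters=3))
```

Picking the member nearest the centroid, rather than the centroid itself, guarantees that each few-shot example is a real query from the dataset.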

Choice of language model

For all of the following experiments, we fix Gemini 1.0 Ultra58 as the underlying language model. Our goal is not to study which language model is best at our task. Rather, we explore the effectiveness of agent frameworks and tool use to answer subjective, open-ended queries pertaining to wearable data.

Experimental design and evaluation

Baselines

Numerical reasoning

Because language models have some, if modest, mathematical ability52,59, PHIA’s code interpreter may not be necessary to answer personal health queries. To test this, in the first baseline, the user’s data is formatted as a Markdown table and supplied directly to the language model as text, together with the corresponding query. Markdown has previously been shown to be one of the most effective formats for LLM-aided tabular data processing60. Analogous to PHIA, we designed a set of few-shot examples to guide the model through rudimentary operations, such as calculating the average of a data column over the last 30 days, as shown in Supplementary Fig. 9.
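Serializing a table into the prompt for this baseline might look like the following sketch. The Markdown layout is standard, but the prompt wording (`Data:`, `Question:`, `Answer:`) and the `build_prompt` helper are hypothetical; Pandas' own `DataFrame.to_markdown` would also work but requires the optional `tabulate` dependency, so a small formatter is written out here.

```python
import pandas as pd


def to_markdown_table(df: pd.DataFrame) -> str:
    """Render a date-indexed DataFrame as a Markdown table."""
    header = "| date | " + " | ".join(map(str, df.columns)) + " |"
    separator = "|" + " --- |" * (len(df.columns) + 1)
    rows = [
        f"| {idx.date()} | " + " | ".join(str(v) for v in row) + " |"
        for idx, row in zip(df.index, df.itertuples(index=False))
    ]
    return "\n".join([header, separator, *rows])


def build_prompt(df: pd.DataFrame, query: str, few_shot: str = "") -> str:
    """Hypothetical prompt layout: optional few-shot examples, the table, the query."""
    return f"{few_shot}Data:\n{to_markdown_table(df)}\n\nQuestion: {query}\nAnswer:"


df = pd.DataFrame({"steps": [8000, 12000]},
                  index=pd.to_datetime(["2023-10-01", "2023-10-02"]))
print(build_prompt(df, "What was my average daily step count?"))
```

Every row of the table consumes context tokens, and the model must perform the arithmetic itself, which is precisely what this baseline is meant to test.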

Code Generation

Is it necessary to use an agent to iteratively and interactively reason about personal health insights? To investigate this question, our second baseline is a Code Generation model that generates answers in a single step. Unlike PHIA, this approach lacks a reflective Thought step, so it can neither strategize and plan multiple steps ahead nor analyze wearable data iteratively. Moreover, it cannot augment its personal health domain knowledge because it does not have access to web search. This baseline builds on prior work in code and SQL generation for data science, where language models generate code in response to natural language queries61,62,63,64. For a fair comparison, this baseline was given its own set of few-shot examples that use the same queries as PHIA’s, with responses and code written by humans to reflect the restricted capabilities of the Code Generation model (i.e., no additional tool use and no iterative reasoning). Examples are available in Supplementary Figs. 5–8.

Additional LLM-based wearable systems

We also compare PHIA against recent LLM-based methods, including the Personal Health Large Language Model (PH-LLM)32, a fine-tuned LLM based on Gemini 1.0 Ultra that delivers fitness and sleep coaching from aggregated 30-day wearable data (e.g., the 95th percentile of sleep duration) rather than high-resolution daily data. Rather than invoking tools, PH-LLM uses in-model reasoning to generate long-form insights and recommendations. Moreover, PH-LLM is fine-tuned only to provide coaching recommendations, not numerical insights or answers to general wearable-based queries. Additionally, we compare our approach to a specialized chain-of-thought prompting strategy designed for interpreting time-series wearable data with the GPT-4 model33. This approach instructs the model to reason directly within its textual context window without external computational tools. Overall, unlike PHIA, these methods rely on internal model reasoning and do not incorporate an iterative agentic framework or external tools. In addition, because the chain-of-thought baseline uses GPT-4 rather than Gemini, it lets us compare our approach against a strong baseline built on a different language model.

Experiments

With the aforementioned baselines in mind, we conducted the following experiments to examine PHIA’s capabilities.

Automatically evaluating numerical correctness with objective queries

Some personal health queries have objective solutions that afford automatic evaluation (see “Methods” section for details). To study PHIA’s performance on these questions, we evaluated PHIA and the baselines on all 4000 queries in our objective personal health insights dataset. A query was considered correctly answered if the model’s final response matched the ground truth to two decimal places (e.g., given a ground truth answer of 2.54, a response of 2.541 would be considered correct, whereas 2.53 would be considered incorrect). We compared PHIA against the Numerical Reasoning and Code Generation baselines and two LLM-based wearable systems (PH-LLM and custom-prompted GPT-4).
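An automatic scorer for this criterion could look like the sketch below. Two details are assumptions, not taken from the text: the numeric answer is taken to be the last number in the final response, and "two decimal places" is read as rounding both values half-up to two decimals. `Decimal` is used to avoid binary floating-point surprises at rounding boundaries.

```python
import re
from decimal import ROUND_HALF_UP, Decimal
from typing import Optional


def round2(x: float) -> Decimal:
    """Round half-up to two decimal places (assumed reading of the criterion)."""
    return Decimal(str(x)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def extract_number(response: str) -> Optional[float]:
    """Hypothetical parser: take the last number in the response, ignoring thousands separators."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", response.replace(",", ""))
    return float(matches[-1]) if matches else None


def is_correct(response: str, truth: float) -> bool:
    value = extract_number(response)
    return value is not None and round2(value) == round2(truth)


print(is_correct("Your average was 2.541 hours.", 2.54))  # True
print(is_correct("Your average was 2.53 hours.", 2.54))   # False
```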

Evaluating open-ended insights reasoning with human raters

Open-ended personal health queries demand precise interpretation to integrate user-specific data with expert knowledge. To assess open-ended reasoning capability, we recruited a team of twelve independent annotators with substantial familiarity with wearable data in the domains of sleep and fitness. They evaluated the reasoning quality of PHIA and our Code Generation baseline on the open-ended query dataset, whose query types are listed in Table 1. Because the annotators had minimal experience with Python data analysis, two domain experts developed a translation pipeline with Gemini Ultra to translate Python code into explanatory English text (examples available in Supplementary Figs. 10–14). Annotators were also provided with the final model responses.

Annotators assessed whether each model response demonstrated the following attributes: relevance of the data used, accuracy in interpreting the question, personalization, incorporation of domain knowledge, correctness of the logic, absence of harmful content, and clarity of communication. Additionally, they rated the overall reasoning of each response on a Likert scale from one (“Poor”) to five (“Excellent”). Responses were distributed so that each was rated by at least three unique annotators, who were blinded to the method used to generate the response. Rubrics and annotation instructions can be found in Supplementary Table 1. To standardize comparisons across metrics, final scores were obtained by mapping the original 1–5 ratings onto a 0–100 range. Scores for yes/no questions are the proportion of annotators who answered “Yes”. For example, a “Yes” for domain knowledge indicates that the annotator found the response to show an understanding of domain knowledge. In total, more than 5500 model responses and 600 h of annotator time were used in this evaluation. Additional reasoning evaluation with real-user data can be found in Supplementary Fig. 15.
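The score conversion can be made explicit. The text states only that 1–5 ratings were mapped to 0–100; this sketch assumes the natural linear map (1 → 0, 3 → 50, 5 → 100), expresses the yes/no proportion as a percentage so that both metrics share the 0–100 scale, and averages over the annotators of a response. The function names are illustrative.

```python
def likert_to_100(rating: float) -> float:
    """Linearly map a 1-5 Likert rating onto 0-100 (assumed mapping)."""
    if not 1 <= rating <= 5:
        raise ValueError("rating must be between 1 and 5")
    return (rating - 1) / 4 * 100


def yes_score(answers: list[str]) -> float:
    """Percentage of annotators answering 'Yes' to a binary question."""
    return 100 * sum(a == "Yes" for a in answers) / len(answers)


# Three annotators rate one response.
overall = sum(likert_to_100(r) for r in [4, 5, 3]) / 3
print(overall)                                  # 75.0
print(yes_score(["Yes", "No", "Yes", "Yes"]))   # 75.0
```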

Evaluating code quality with domain experts

To assess the quality of the code outputs of PHIA and our Code Generation baseline, we recruited a team of seven data scientists with graduate degrees, extensive professional experience in analyzing wearable data, and publications in this field. Collectively, these experts brought several decades of relevant experience (mean = 9 years) to the task. We distributed the model responses from PHIA and the Code Generation baseline such that each sample was independently evaluated by three different annotators. Experts were blinded to the experimental condition (i.e., whether the response was generated by PHIA or Code Generation baseline). Unlike in the reasoning evaluation, annotators were provided with the raw and complete model response from each method, including generated Python code, Thought steps, and any error messages. Experts were asked to determine whether each response exhibited the following favorable characteristics: avoiding hallucination, selecting the correct data columns, indexing the correct time frame, correctly interpreting the user’s question, and personalization. Finally, annotators were instructed to rate the overall quality of each response using a Likert scale ranging from one to five (instruction details in Supplementary Table 2). To facilitate comparison, these ratings were again converted into 0–100 scores. In total, 595 model responses collected over 50 h were used in this evaluation.

Conducting comprehensive error analysis

Additionally, we quantitatively measured code quality by calculating how often a method fails to generate valid code while answering a personal health insights query. To this end, we determined each method’s “Error Rate”: the number of responses containing code that raises an error (e.g., from indexing columns that don’t exist, importing inaccessible libraries, or syntax mistakes), divided by the total number of responses that used code. To better understand the sources of errors, two experts independently performed open coding on all the responses in the open-ended dataset. They were instructed to look for errors, including hallucinations, Python code errors, and misinterpretations of the user’s query. The identified errors were grouped into the following semantic categories: Hallucination, General Code Errors, Misinterpretation of Data, Pandas Operations, and Other.
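The Error Rate definition is a simple ratio; the sketch below makes the denominator explicit. The `Response` record is hypothetical. Responses that never used code are excluded from the denominator, and the rate is reported as undefined (NaN) when no response used code.

```python
import math
from dataclasses import dataclass


@dataclass
class Response:
    """Hypothetical per-response record."""
    used_code: bool
    raised_error: bool


def error_rate(responses: list[Response]) -> float:
    """Errored code-using responses divided by all code-using responses."""
    with_code = [r for r in responses if r.used_code]
    if not with_code:
        return math.nan  # undefined when no response used code
    return sum(r.raised_error for r in with_code) / len(with_code)


sample = [Response(True, True), Response(True, False), Response(True, False),
          Response(True, True), Response(False, False)]
print(error_rate(sample))  # 0.5
```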

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.