The Google Threat Intelligence Group (GTIG) has released a report describing a significant shift in the threat landscape: attackers are no longer using AI only for assistance or to write code, but are integrating it directly into malware.

This allows the malware to generate new instructions, adapt and modify itself autonomously during an attack, marking a new era in cyber warfare in which threats can change their behaviour and evade detection on their own.

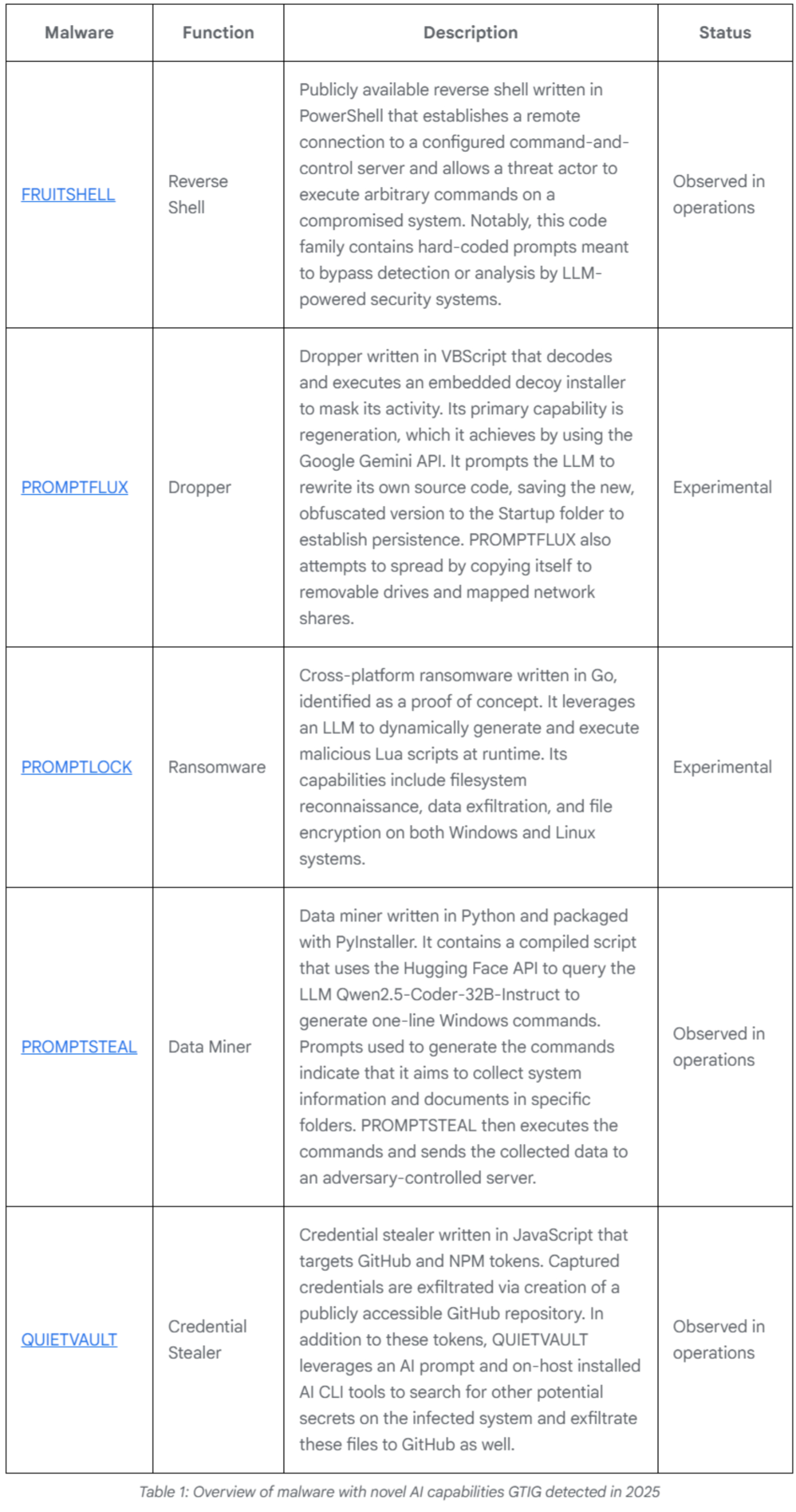

Google has identified specific examples of this new breed of malware, such as PROMPTFLUX and PROMPTSTEAL, which utilise Large Language Models (LLMs). These malware families generate new malicious scripts or commands each time they run. PROMPTFLUX, written in VBScript, sends requests to the Gemini API asking for help in writing new, obfuscated code to evade antivirus software.

PROMPTSTEAL, reportedly used by the Russian APT28 group in attacks on Ukraine, takes a different approach: it disguises itself as an image generation programme and uses the Qwen model to generate commands for stealing local data instead of relying on pre-written code.

Photo: cloud.google.com

The report highlights that some hacker groups have begun using social engineering techniques against AI models themselves to trick them into writing malicious code. They use seemingly innocent pretexts, such as posing as a Capture-the-Flag contestant to get Gemini to suggest vulnerabilities, or claiming to be a student working on a final project to obtain coding assistance. This shows that attackers are moving beyond deceiving humans and are now actively trying to deceive AI systems.

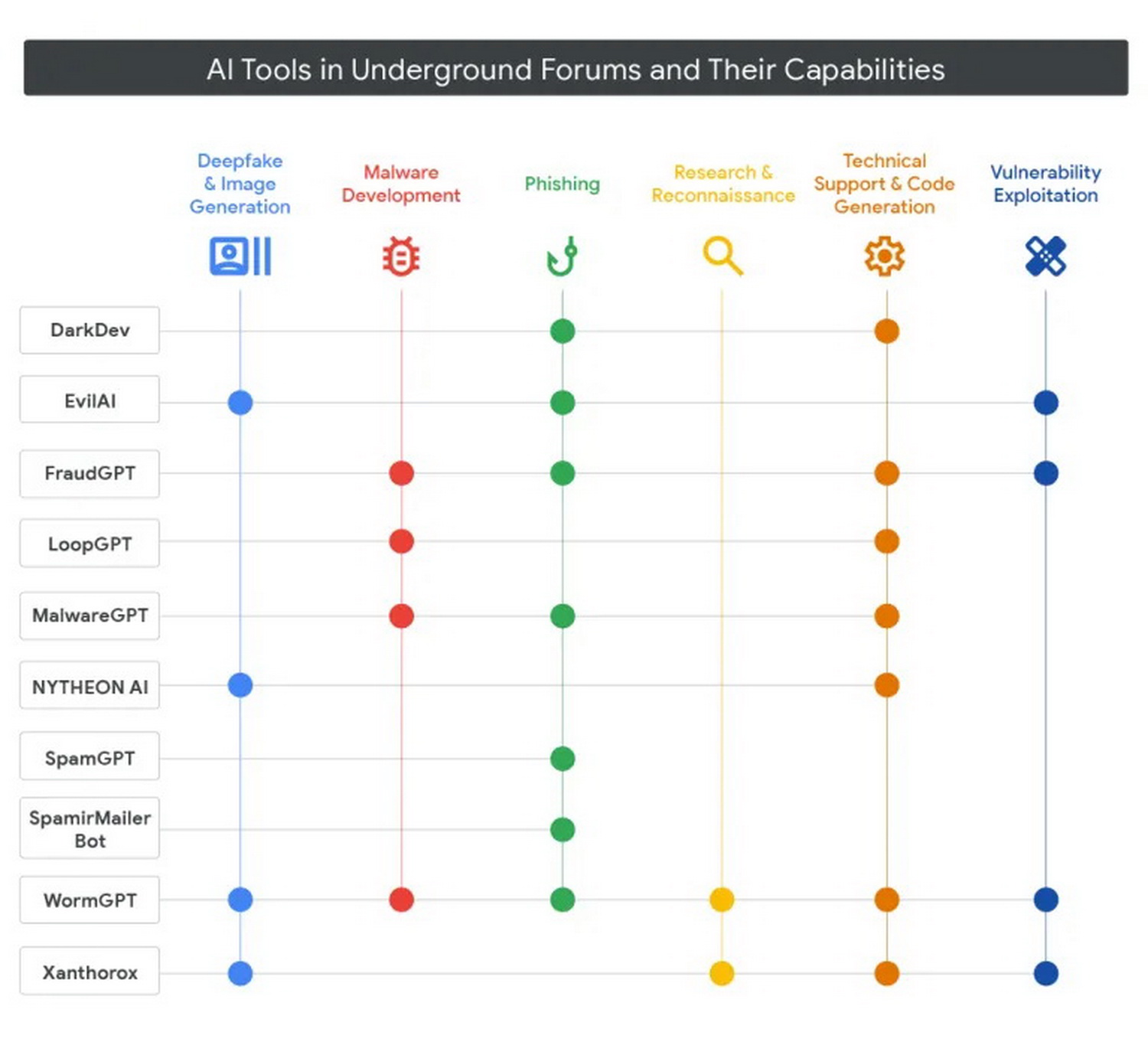

The report further indicates that the underground market for AI-powered hacking tools grew rapidly in 2025. Services such as WormGPT, FraudGPT and LoopGPT are being sold, offering capabilities that range from writing phishing emails and creating malware to exploiting system vulnerabilities.


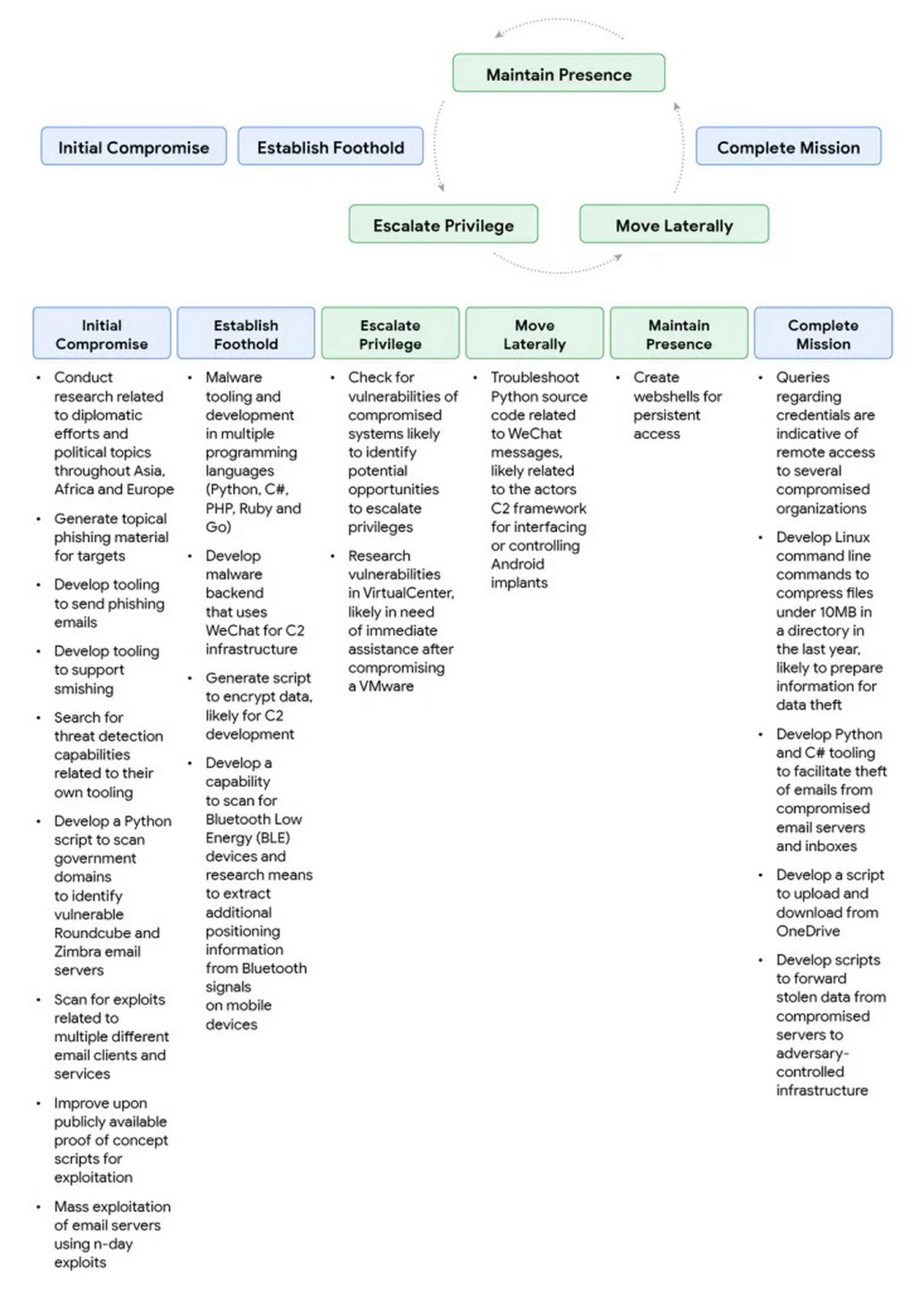

The availability of these tools makes it easier than ever for even novice hackers to create complex malware. At the same time, state-sponsored hacker groups are using AI models for attack planning, information gathering, preparing sophisticated phishing campaigns, and developing command-and-control infrastructure and data exfiltration capabilities.

In response to this escalating threat, Google has disabled accounts and projects associated with malicious actors and says it is continually improving its Gemini models so that they are better equipped to recognise and refuse misuse.

Google is also working with its DeepMind team to develop tools such as Big Sleep and CodeMender, which use AI to automatically detect and patch vulnerabilities. The stated goal is to build AI that is both advanced and safe, so that people can use the technology responsibly in an age where AI serves as both a powerful weapon and a crucial shield.

Source: Google
