Most email problems don’t arrive with flashing warnings. They arrive quietly: a slightly higher bounce rate, a small dip in opens, a campaign that “should have worked” but didn’t. If you’ve ever tried to debug that feeling, you already understand why teams search for an email validator, ways to check email quality, or a quick method to verify email addresses. What helped me most wasn’t a single trick—it was adopting a governance mindset: treating list quality as a controlled system, not a one-off cleanup.

During a recent list review (a mix of aged signups, partner leads, and historic CRM exports), I ran the addresses through Email verifier as a front-door control. In my testing, it felt less like a promotional “tool” and more like a policy input: it produced the kinds of categories and confidence signals you can actually write rules around—especially when the ecosystem is inherently probabilistic.

Why “Email Validation” Is Really a Risk Management Problem

It’s not only about bad addresses

If validation were only about catching obvious fakes, a simple email address validator (format check) would be enough. But modern deliverability failures often come from gray areas:

- Addresses that once worked but have expired

- Domains configured as catch-all

- Role inboxes that accept mail but behave differently

- Temporary inboxes used by real people who never return

An effective mail checker reduces risk by turning these gray areas into explicit labels you can act on.

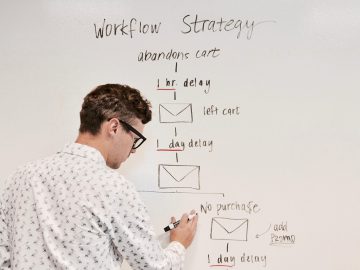

Treat list quality like a control system

In a control system, you don’t wait for failure. You measure early indicators and adjust before the system destabilizes. For email sending, validation is one of those indicators.

A Practical Framework: The Four Decisions You Actually Need to Make

When you check if email exists, you’re not seeking philosophical truth—you’re trying to decide what to do next. In practice, validation supports four operational decisions:

1) Should this address enter the database at all?

Real-time validation at signup prevents long-term data pollution.

2) Should we send to it now?

Pre-send checks are about reputation protection and efficiency.

3) If we send, how should we send?

Risk-tiered sending (clean first, uncertain later) can be safer than all-or-nothing.

4) How should this address be treated going forward?

Store labels and timestamps so the decision is traceable and repeatable.
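As a minimal sketch of what "labels and timestamps" could look like in storage: the record shape, the label strings, and the 90-day re-check window below are all illustrative assumptions, not something a particular tool prescribes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ValidationRecord:
    """One stored validation decision (hypothetical schema)."""
    email: str
    label: str              # e.g. "clean", "invalid", "catch_all", "unknown"
    validated_at: datetime  # timezone-aware timestamp of the check


def needs_recheck(record: ValidationRecord, now: datetime,
                  max_age: timedelta = timedelta(days=90)) -> bool:
    """Return True when the stored label is older than max_age.

    The 90-day default is an assumption; tune it to how fast your list ages.
    """
    return now - record.validated_at > max_age


record = ValidationRecord(
    email="ana@example.com",
    label="clean",
    validated_at=datetime(2024, 1, 10, tzinfo=timezone.utc),
)
print(needs_recheck(record, now=datetime(2024, 6, 1, tzinfo=timezone.utc)))  # True
```

Keeping the timestamp next to the label is what makes a decision repeatable: anyone can see when an address was last checked and whether the label is still fresh enough to trust.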

This is where validation stops being a tool and becomes governance.

What I Look For in an Email Validator Output (Beyond “Valid/Invalid”)

In my experience, the most useful outputs are the ones that let you distinguish between:

- Certain negatives (invalid domains, missing MX, clear nonexistence)

- Certain positives (clean signals, consistent checks)

- Operational uncertainty (unknown, accept-all/catch-all)

- Behavioral risk (disposable, role-based)

The last two categories, operational uncertainty and behavioral risk, are where teams usually leak performance without realizing it.
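One way to make these four categories concrete is to map whatever raw statuses your validator returns onto them. The status strings below are hypothetical placeholders; replace them with the values your provider actually emits.

```python
from enum import Enum


class Bucket(Enum):
    CERTAIN_NEGATIVE = "certain_negative"
    CERTAIN_POSITIVE = "certain_positive"
    UNCERTAIN = "operational_uncertainty"
    BEHAVIORAL_RISK = "behavioral_risk"


# Hypothetical raw statuses; real validators use their own vocabulary.
STATUS_TO_BUCKET = {
    "invalid_domain": Bucket.CERTAIN_NEGATIVE,
    "no_mx": Bucket.CERTAIN_NEGATIVE,
    "mailbox_not_found": Bucket.CERTAIN_NEGATIVE,
    "clean": Bucket.CERTAIN_POSITIVE,
    "unknown": Bucket.UNCERTAIN,
    "catch_all": Bucket.UNCERTAIN,
    "disposable": Bucket.BEHAVIORAL_RISK,
    "role_based": Bucket.BEHAVIORAL_RISK,
}


def bucket_for(status: str) -> Bucket:
    """Map a raw status to a bucket.

    Unrecognized statuses fall back to UNCERTAIN rather than being guessed.
    """
    return STATUS_TO_BUCKET.get(status.strip().lower(), Bucket.UNCERTAIN)


print(bucket_for("catch_all"))   # Bucket.UNCERTAIN
print(bucket_for("disposable"))  # Bucket.BEHAVIORAL_RISK
```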

The “Before vs After” That Matters: Policy, Not Perfection

Before: reactive hygiene

- Import leads

- Send campaigns

- Remove hard bounces after damage

- Repeat

After: proactive governance

- Validate at entry and before major sends

- Tag addresses by risk category

- Segment sending by risk

- Review outcomes and refine rules

In my tests, the benefit was not “zero bounces.” It was stability: fewer surprises, cleaner analytics, and fewer reputation scares.

Comparison Table: Validation Approaches as Operating Models

| Operating model | What it optimizes | What it ignores | Typical outcome | Best for |
| --- | --- | --- | --- | --- |
| Format-only email address validator | Speed and simplicity | Domain/MX readiness, mailbox behavior, risk labels | “Looks valid” lists that still bounce | Small forms, low-stakes lists |
| Manual “send and clean later” | Minimal setup | Reputation cost, wasted volume, delayed learning | Reactive churn and noisy metrics | Very small senders, experimentation |
| Generic mail checker (varies) | Basic hygiene | Nuance around uncertainty and policy design | Mixed value depending on signals offered | General use, moderate scale |
| Policy-driven verification workflow | Risk control and repeatability | Assumes some uncertainty is unavoidable | More stable deliverability and decision clarity | Growth teams, sales ops, lifecycle email |

The key difference is governance. If validation output can’t translate into rules, it tends to be ignored.

How to Turn Verification Results into Rules (A Lightweight Policy)

Below is a simple set of rules that worked well for me as a starting point. You can adjust based on your tolerance for risk.

Rule set A: Entry validation (signup / lead capture)

- Disposable: require confirmation or reject

- Role-based: allow with warning (B2B) or route to secondary confirmation

- Unknown: allow but tag for re-check

- Clean signals: accept
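Rule set A is small enough to express as a single function. This is a sketch under assumptions: the label strings and the returned action names are illustrative, and labels the rule set does not cover raise an error instead of inventing a policy for them.

```python
def entry_decision(label: str, is_b2b: bool = False) -> str:
    """Apply Rule set A to one validated signup (illustrative label names)."""
    if label == "disposable":
        # The rule allows either confirmation or rejection; this sketch
        # picks confirmation as the less strict option.
        return "require_confirmation"
    if label == "role_based":
        return "allow_with_warning" if is_b2b else "secondary_confirmation"
    if label == "unknown":
        return "allow_and_tag_for_recheck"
    if label == "clean":
        return "accept"
    # Rule set A says nothing about other labels, so fail loudly.
    raise ValueError(f"no entry rule defined for label {label!r}")


print(entry_decision("role_based", is_b2b=True))  # allow_with_warning
print(entry_decision("unknown"))                  # allow_and_tag_for_recheck
```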

Rule set B: Pre-send validation (campaign / newsletter / outbound)

- Invalid: suppress

- Clean: send normally

- Accept-all/catch-all: send in smaller batch; monitor bounces and engagement

- Unknown: retry later or treat as “hold” if volume is high-risk

This approach is intentionally conservative without being rigid.
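Rule set B can be sketched as a lookup table plus a grouping step. The label and action names are assumptions, and sending unlisted labels to "hold" is a conservative default chosen for this example, not part of the rule set itself.

```python
from collections import defaultdict

# Rule set B as a lookup table (illustrative label and action names).
PRE_SEND_ACTIONS = {
    "invalid": "suppress",
    "clean": "send",
    "catch_all": "send_small_batch",
    "unknown": "hold",  # or schedule a retry, depending on risk tolerance
}


def plan_send(labeled_addresses):
    """Group (email, label) pairs by the action Rule set B assigns.

    Labels missing from the table fall back to "hold" (an assumption).
    """
    plan = defaultdict(list)
    for email, label in labeled_addresses:
        plan[PRE_SEND_ACTIONS.get(label, "hold")].append(email)
    return dict(plan)


plan = plan_send([
    ("ana@example.com", "clean"),
    ("old@example.org", "invalid"),
    ("info@example.net", "catch_all"),
])
print(plan)
```

Keeping the policy in a table rather than in branching code makes it easy to review and adjust when your tolerance for risk changes.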

Limitations You Should Expect (And Design Around)

A realistic email validator won’t promise effortless certainty, because the ecosystem doesn’t always allow it.

1) Catch-all domains are structurally ambiguous

Some domains accept any recipient. That means you can’t reliably prove a mailbox exists, even when the domain is legitimate.
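One common heuristic for spotting this, offered here as an illustration rather than a description of how any specific tool works, is to ask the domain about a random address that almost certainly does not exist. If the server accepts it, acceptance of any other address on that domain tells you very little. The `probe` callable is a hypothetical stand-in for whatever recipient check you have available; no real network call is made in this sketch.

```python
import secrets
from typing import Callable


def looks_catch_all(domain: str, probe: Callable[[str], bool],
                    attempts: int = 2) -> bool:
    """Guess whether a domain accepts any recipient.

    probe(address) is a hypothetical callable returning True when the
    server accepted that recipient. The result is a heuristic signal,
    not proof.
    """
    for _ in range(attempts):
        random_local = "probe-" + secrets.token_hex(8)
        if not probe(f"{random_local}@{domain}"):
            return False  # a random address was rejected: not accept-all
    return True


# Stand-in probes for demonstration only.
print(looks_catch_all("example.com", probe=lambda address: True))   # True
print(looks_catch_all("example.com", probe=lambda address: False))  # False
```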

2) SMTP behavior can be intentionally opaque

Servers may rate-limit, mask responses, or behave differently depending on timing and volume. “Unknown” is often an honest result, not a failure.

3) Data quality sets the ceiling

If the list source is low-trust (scraped, purchased, heavily outdated), validation will surface more invalid/uncertain outcomes. That’s the system telling you the input is risky.

A Neutral Reference for Why “Mailbox Existence” Isn’t Always Deterministic

If you want non-marketing context: SMTP is specified in RFC 5321, and the Internet Message Format in RFC 5322. RFC 5321 allows sites to disable address-verification commands such as VRFY for security reasons, which helps explain why servers can behave in ways that prevent deterministic proof of inbox existence, and why practical verification is often probabilistic.

A Simple Way to Start Without Overhauling Your Stack

If you want a low-effort experiment that still produces real insight:

- Take your next campaign segment (not your entire database).

- Validate and tag results.

- Send in two waves:

  - Wave 1: clean segment

  - Wave 2: accept-all/catch-all segment (smaller volume)

- Compare bounces and engagement.

- Store the tags and the validation date for future governance.

If the second wave behaves meaningfully worse, you’ve just validated the value of policy—without needing a full rebuild.
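The comparison itself is simple arithmetic. A minimal sketch, using made-up counts purely for illustration:

```python
def bounce_rate(sent: int, bounced: int) -> float:
    """Bounces as a fraction of messages sent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return bounced / sent


def compare_waves(wave1, wave2):
    """Each wave is a (sent, bounced) tuple; returns both rates and the gap."""
    rate1 = bounce_rate(*wave1)
    rate2 = bounce_rate(*wave2)
    return {
        "wave1_bounce_rate": rate1,
        "wave2_bounce_rate": rate2,
        "difference": rate2 - rate1,
    }


# Hypothetical numbers: 1,000 clean sends with 5 bounces,
# 200 catch-all sends with 6 bounces.
result = compare_waves((1000, 5), (200, 6))
print(f"wave 1: {result['wave1_bounce_rate']:.1%}")  # wave 1: 0.5%
print(f"wave 2: {result['wave2_bounce_rate']:.1%}")  # wave 2: 3.0%
```

What counts as "meaningfully worse" is a judgment call for your own program; the point is to record the gap so the next policy decision rests on data.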

FAQ: The Questions People Usually Mean When They Search These Keywords

“Email validator” — what should I expect it to do?

At minimum: format, domain, and MX checks. For stronger decision-making, you also want risk labels and a way to handle uncertainty.

“Check email” — is format enough?

Format is necessary but rarely sufficient. Many addresses look correct and still cannot receive mail.
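To see why, consider a deliberately simple format check. The pattern below is an illustrative assumption, not a full RFC 5322 parser, and it performs no domain or mailbox lookups.

```python
import re

# Simple shape check: one "@", a non-empty local part, a dotted domain.
_SIMPLE_EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s.]+$")


def looks_like_email(address: str) -> bool:
    """Return True when the string has the basic shape of an email address."""
    return bool(_SIMPLE_EMAIL.fullmatch(address))


print(looks_like_email("not-an-email"))          # False
print(looks_like_email("user@example.com"))      # True
# ".invalid" is a reserved top-level domain that never resolves,
# yet the address still passes a format-only check.
print(looks_like_email("user@example.invalid"))  # True
```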

“Verify email” — can it guarantee inbox placement?

No. Inbox placement depends on sender reputation, authentication, content, and recipient behavior. Verification reduces one major class of failures: bad addresses and high-risk patterns.

“Check if email exists” — why is the answer sometimes ‘unknown’?

Because some servers are designed not to reveal mailbox validity, and catch-all configurations make individual inbox existence ambiguous. A responsible system reports uncertainty rather than guessing.